Recent Projects

Training agents for zero-shot coordination

The ad hoc teammate problem, adapting to new teammates on the fly, is a bandit problem that requires the agent to balance exploration (learning about its teammates) with exploitation (accomplishing team goals). In this joint work with researchers from CMU and USC, we investigated approaches to developing training populations that allow an agent to coordinate effectively with novel teammates.

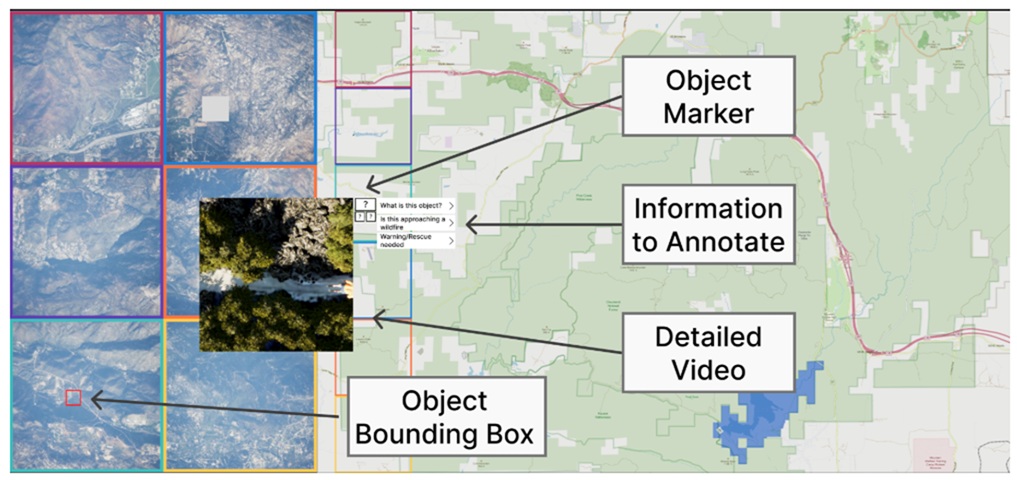

Situation Awareness in supervision of multi-UAV teams

We have been working on the problem of supervising multiple robots for the past 20 years. In the 2020s, applications have been catching up, and it has become more important to match research to current capabilities. In this project we took on the Wildfire XPrize task of searching 1,000 km² in 10 minutes using teams of class-2 UAVs. Our human-in-the-loop testing showed that while human supervisors could accurately verify detected targets, they could not piece together an understanding of the evolving situation.

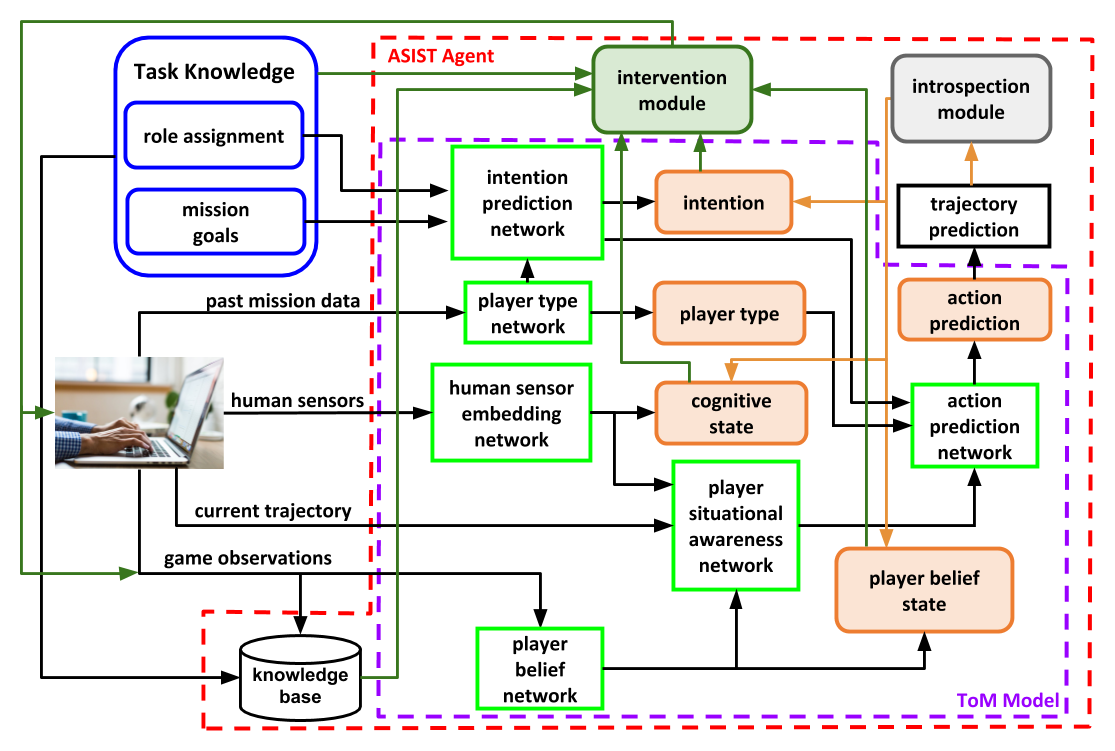

ASIST: A Robust and Adaptive Agent that Supports High Performance Teams

The ability to infer another human’s beliefs, desires, intentions, and behavior from observed actions, and to use those inferences to predict future actions, has been described as Theory of Mind (ToM). In conjunction with researchers at CMU, we are working to develop AI agents capable of making such ToM inferences: in the first year for a single human, next for dyads, and by year 4 for human teams with heterogeneous roles.

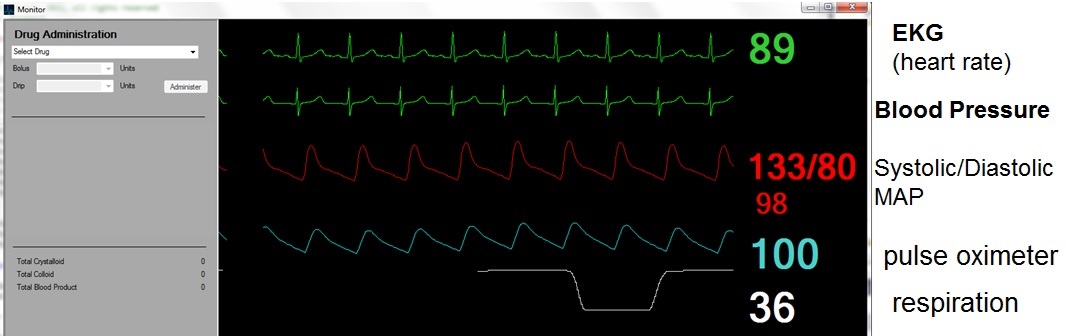

Robust Human-Machine Teaming

In the past, robots were programmed to perform tasks and interact with humans. We are now exploring ways to learn optimal policies for teaming and ways to adapt robots and humans to cooperate more effectively.

Before 2020

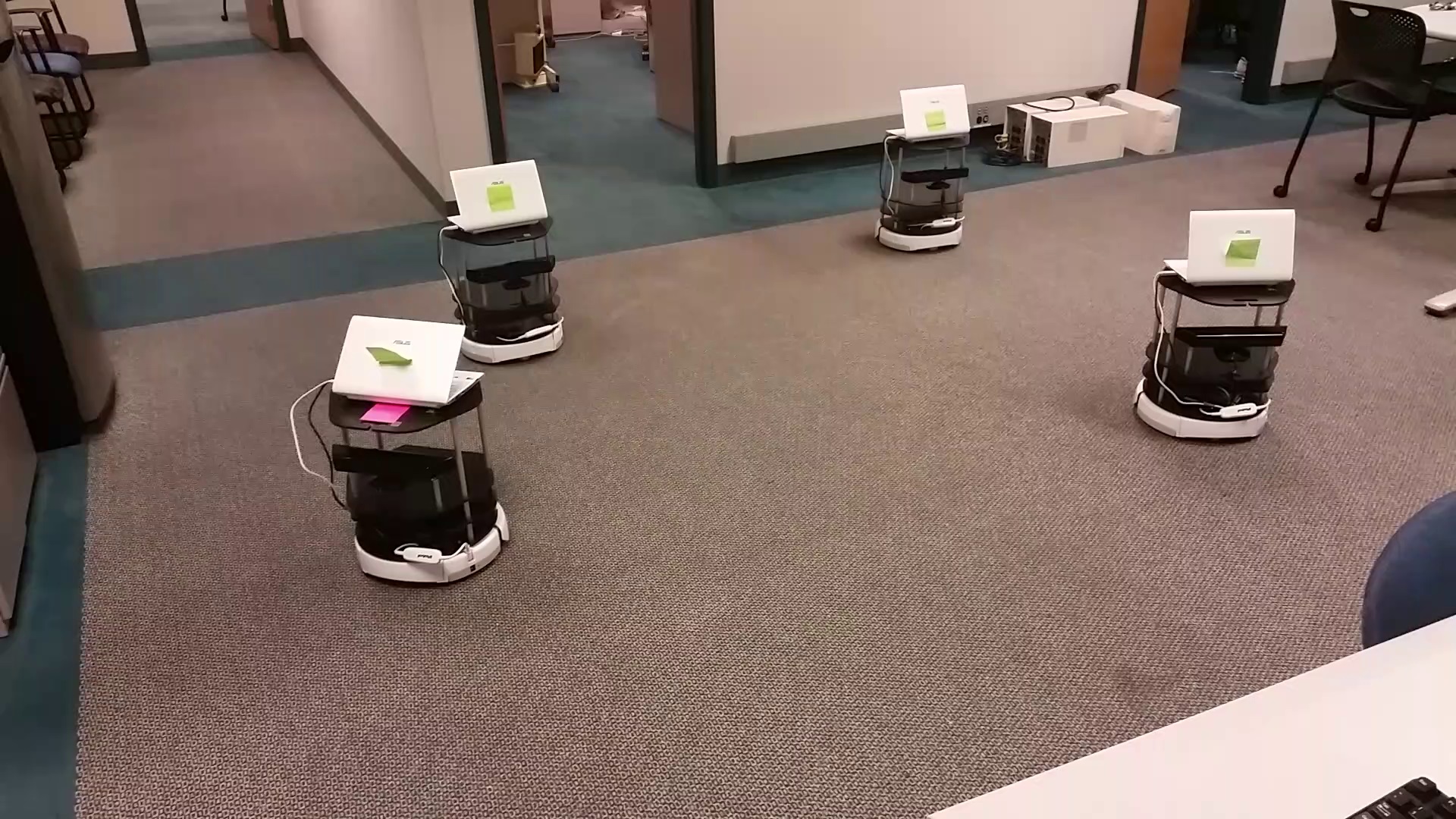

Trustworthy Interaction with Robot Swarms

Successful interaction with autonomy depends crucially on human trust and its influence on reliance. In our work we are developing both normative models of performance based on proper calibration of trust and adaptive models by which autonomous systems can use indices of human trust to adapt their characteristics and improve the performance of the overall system. While this would be challenging for a single robot, we are working with very large (1,000+) swarms in CUDA-based simulations and a small swarm of 10 Turtlebots in the lab.

Formal Models of Human Control of Cyber Physical Systems

The Influence of Cultural Factors on Trust in Automation

This project seeks to develop a validated measure of trust in automation and investigate cultural differences in the concept and resulting behavior in samples from the US, Taiwan, and Turkey.

Cognitive Compliant Command for Multirobot Teams

In this project we are developing methods for commanding robot teams of various sizes and levels of autonomy. We have conducted studies examining the feasibility of scheduling operator attention to enable supervision of multiple robots. In other work we have investigated approaches allowing human supervision of robot swarms.

Modeling Synergies in Large Human-Machine Networked Systems

This research is mathematically and empirically based, drawing on human data and models to characterize human behavior within the system. Research involved human control of multiple robots, cognitive modeling of human operators, and experiments and models of multi-human/multi-robot teams.

Cultural Models, Collaborations and Negotiation

Researchers from the University of Pittsburgh will lead in designing, conducting, and analyzing data from online negotiation experiments. Observing interactive negotiation is necessary to capture the processes needed to analyze and understand the dynamics of cooperation and negotiation, as well as the tipping points that could lead to beneficial or disastrous effects.
